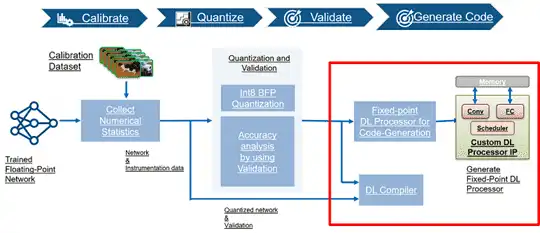

Calibrate, validate, and deploy quantized pretrained series deep learning networks

Increase throughput, reduce resource utilization, and deploy larger networks onto smaller target boards by quantizing your deep learning networks.

After calibrating your pretrained series network by collecting instrumentation data, quantize the network and validate the accuracy of the quantized result. Once the quantized network has been validated, generate code for it and deploy it to the target board.
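The calibrate, quantize, and validate sequence can be illustrated with a small conceptual sketch in plain Python. It is not the toolbox implementation and does not use its API: the helper names (`collect_max_abs`, `quantize`, `dequantize`), the symmetric single-scale 8-bit scheme, and the random stand-in data are all assumptions chosen for illustration only.

```python
import random

def collect_max_abs(batches):
    """Calibration (hypothetical helper): record the largest magnitude seen."""
    return max(abs(v) for batch in batches for v in batch)

def quantize(values, max_abs):
    """Map floats to 8-bit integer codes in [-127, 127] using one scale factor."""
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # Values outside the calibrated range saturate at the ends of the code range.
    codes = [max(-127, min(127, round(v / scale))) for v in values]
    return codes, scale

def dequantize(codes, scale):
    """Recover approximate float values from the integer codes."""
    return [c * scale for c in codes]

# Stand-in data: random values play the role of layer activations (assumption).
random.seed(0)
calibration_batches = [[random.gauss(0, 1) for _ in range(64)] for _ in range(8)]
validation_batch = [random.gauss(0, 1) for _ in range(64)]

# 1. Calibrate: collect the dynamic range from calibration data.
max_abs = collect_max_abs(calibration_batches)

# 2. Quantize: convert validation data to 8-bit scaled integers.
codes, scale = quantize(validation_batch, max_abs)

# 3. Validate: compare the dequantized values with the reference,
#    clipped to the calibrated range.
restored = dequantize(codes, scale)
reference = [max(-max_abs, min(max_abs, v)) for v in validation_batch]
max_error = max(abs(r - x) for r, x in zip(restored, reference))
```

Under these assumptions, the rounding error for any value inside the calibrated range is at most half of `scale`, so a wider calibrated range gives a coarser quantization step. This is one reason the calibration data should be representative of the inputs the deployed network will see.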

Functions

Quantization and Validation

| Function | Description |
| --- | --- |
| dlquantizationOptions | Options for quantizing a trained deep neural network |
| dlquantizer | Quantize a deep neural network to 8-bit scaled integer data types |
| calibrate | Simulate and collect ranges of a deep neural network |
| validate | Quantize and validate a deep neural network |

Code Generation and Deployment

| Function | Description |
| --- | --- |
| dlhdl.Workflow | Configure deployment workflow for deep learning neural network |
| dlhdl.Target | Configure interface to target board for workflow deployment |
| compile | Compile workflow object |
| deploy | Deploy the specified neural network to the target FPGA board |
| estimate | Estimate performance of specified deep learning network and bitstream for target device board |
| predict | Run inference on deployed network and profile speed of neural network deployed on specified target device |
| release | Release the connection to the target device |
| validateConnection | Validate SSH connection and deployed bitstream |